Vavada casino registration via a mirror site

Users continue to open the Vavada casino site online, register profiles, and use everything the gambling platform offers. The operator has accepted bets legally in many countries since 2017; at launch the company obtained a license from Curaçao, described here as the most popular regulator in online gambling.

The company offers many features that are useful for a successful session, starting with the layout of its home page. At the top, visitors see the brand logo and the options for opening a game profile and logging in to the personal account. Large banners carry information about the welcome bonus, tournaments, prize draws, jackpots, and other useful features.

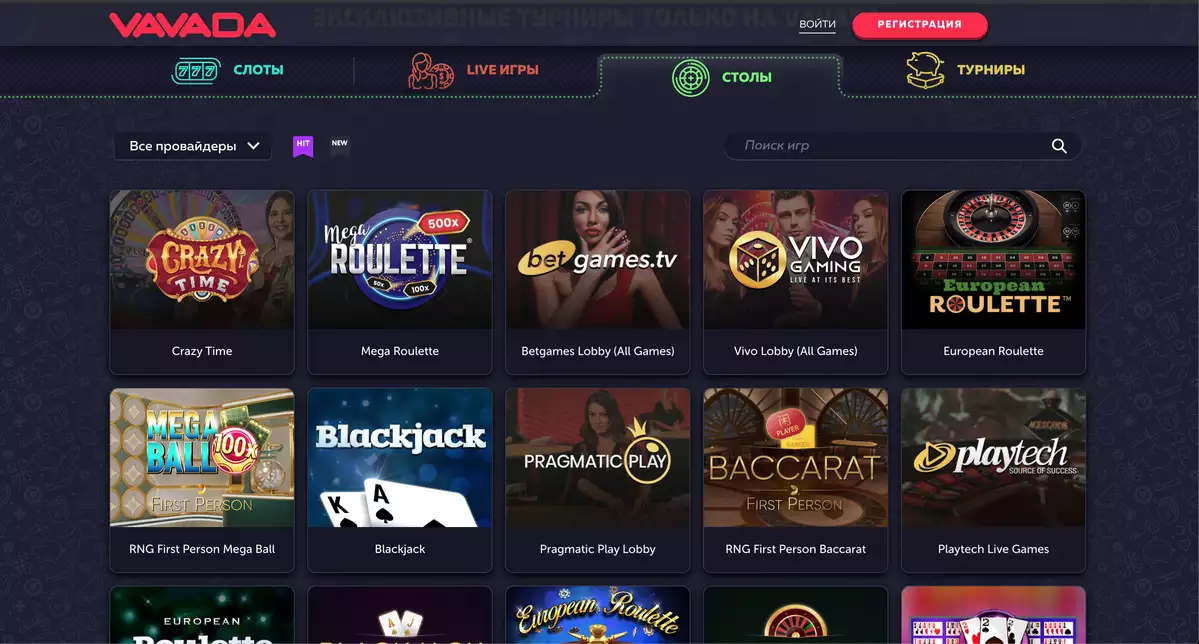

Right below the banners is a game menu with four options:

- Slots. The company currently works with 44 well-known software developers and regularly adds partners and machines. The Vavada slots section has more than 4,500 titles across all popular genres and themes.

- Live casino. The live section includes popular lobbies from five well-known providers: Pragmatic Play, Playtech, Vivo Gaming, Evolution, and Betgames. You can bet on roulette, Monopoly, and other popular live games.

- Tables. The tables section covers bets on classic games: blackjack, roulette, and poker. Arcade games are also shown in this section.

- Tournaments. The company gives out free spins and credits large prizes and cash to participants' balances.

Below the game catalog is the footer, with links to all current ways of contacting support. At the bottom of Vavada casino you will find the interface language switcher, the club's active social media groups, the accepted payment methods, and links to the rules and the responsible gaming page.

| 🕹️ Gaming platform | Vavada |
| --- | --- |
| 🎯 Opening date | 13 October 2017 |
| 🎰 Top providers | NetEnt, Igrosoft, Novomatic, Betsoft, EGT, Evolution Gaming, Thunderkick, Microgaming, Quickspin |
| 🃏 Casino type | Browser, mobile, and live versions |
| 🍋 Operating systems | Android, iOS, Windows |
| 💎 Welcome bonuses | 100 free spins + 100% on the first deposit |
| ⚡ Registration methods | Email, phone, social networks |
| 💲 Game currencies | Rubles, euros, dollars, hryvnias |
| 💱 Minimum deposit | 50 rubles |
| 💹 Minimum withdrawal | 1000 rubles |
| 💳 Payment methods | Visa/MasterCard, SMS, Moneta.ru, Webmoney, Neteller, Skrill |
| 💸 Supported language | Russian |
| ☝ Round-the-clock support | Email, live chat, phone |

Mirror of the official Vavada casino site

Sites that offer online gambling need up-to-date alternative access points to their services, because anti-gambling laws are in force. Internet providers block access to casino betting sites, so customers cannot reach them or log in to the accounts that hold their funds. A Vavada mirror is described as one of the best alternatives: it gives visitors and registered players stable access to the casino's services and all betting options. Currently there are several ways to find working links:

- Write to support. If you can still reach the main version of the online casino, click the question mark (in a mobile browser) or the word "Help" (on a PC) in the upper right corner of the screen to start a live chat conversation, and ask for the current mirror URLs.

- Subscribe to the newsletter. Enable the option to receive messages from the administration by email. Many players skip this feature and therefore learn about the latest changes on their chosen betting platform later than others. Newsletters often include fresh information about the Vavada mirror.

- Check social networks. At the bottom of the home page you will find the logos of the well-known social networks where the club has active groups and a large player community. Working links are often published there, and such posts are pinned so that visitors and group members can reach them quickly.

- Open partner resources and streams. The company is in high demand worldwide and is regularly discussed by well-known players and streamers. Working links are often published on their streaming platforms.

Registration on the Vavada mirror

Continuing the topic, a working Vavada mirror offers bettors the same user experience as the main version, from the layout and minimalist design to all in-game features. For this reason, the club's alternative access points are in great demand. Creating an account gives you access to everything the platform offers. Opening a profile starts with a click on the word "Registration", which is located in the upper right corner of the site and highlighted in red. After the click, the system shows a new screen with a form that asks for the following information:

- Login: enter either a working phone number or a valid email address, whichever you prefer;

- Password: a long value that includes digits and capital letters is recommended;

- Currency: click the option next to it and choose the currency of your game account from the list that opens (rubles, tenge, euros, dollars, and other world currencies);

- Agreement with the rules: unless you tick this checkbox, the system will not let you register a profile.

Click "Registration" once more under the form to create the game profile. The process still has to be confirmed: you receive either an SMS to your phone number or an email with a link that you must follow. Registering with Vavada and logging in to the personal account gives new members quick profile setup (adding or updating personal data), a convenient interface for deposits and withdrawals, easy activation of club gifts in the "Bonuses" section, one-click launch of the live chat, and a view of their status in the loyalty program.

Note one point about the steps after registration: identity verification is not mandatory. The brand leaves it to the player's discretion, but if it suspects unfair play, multi-accounting, or other violations, it will start verification on its own side.

List of working Vavada mirrors

Vavada tournaments

Currently the company offers a huge choice of bets in slots, live casino, and tables with arcade games. The first category, slots, takes part in the contests and is the qualifying category for bets. Vavada tournaments include top games with easy entry conditions. Bettors regularly take part in the following types of competition.

- Free spin tournaments. This line of tournaments is offered to several segments of players according to their current status on the site: bronze, silver, gold, and platinum. The tournaments run daily, the prize pool reaches 25 thousand dollars, and participants are given free spins to take part, with more available to buy from their own real balance.

- Maximum bet tournaments. All registered casino users take part in this type of tournament; status is not considered. The prize pool is $50K, and to win the largest share you need to place a higher total amount of bets than the other participants.

- Vavada X-tournaments. This type of competition always has the largest prize pool, 60 thousand dollars, and entry is open to all users with an active game account. The minimum qualifying bet is just $0.15.

The casino often runs large-scale promo events. For example, 2023 saw two major prize draws. In the first series of tournaments the company gave away several tens of thousands of dollars as well as Mercedes cars. Closer to the New Year, the winners took home BMW cars.

Best slots on Vavada

| 🔥 No-deposit bonus: | 100 free spins |
| --- | --- |
| 💻 Official site: | vavada.com |
| 🎲 Casino type: | Slots, Tables, Live, Tournaments |
| 🗓 Working mirror: | Available |

Vavada mobile app

You can also open the casino site from a mobile device; there are two options. The first is the mobile version in any browser. The site elements are arranged in the same order as on a PC but are optimized for the device's screen size. The second is the Vavada app, which the company developed for smartphones running iOS and Android. To download the software, write to support and ask for working links.

After installing the file on your smartphone, grant the permissions the system requests and start playing at the online casino from your device. The official app offers maximum optimization of complex slots, fast updates in live games and tables, and a block bypass feature, meaning you keep access to your account and funds even when the site is blocked.

Bonuses

Currently the company's bonus program is aimed at all newcomers and existing customers. There are gifts for registration and for deposits, refunds for lost bets, and regular prize draws. The details of each current offer are given below.

Welcome bonus

You start receiving Vavada casino bonuses as soon as you join the platform as a registered user. After the first login to the personal account, users can go to the "Bonuses" section and activate a no-deposit bonus of 100 free spins. All spins must be played in the Great Pigsby Megaways slot from Relax Gaming, with a 20x wagering requirement.

If you make a first deposit, you automatically qualify for the second part of the welcome bonus: 100% on the first deposit. The gift is capped at 1,000 dollars and carries a 35x wager. The club has set a wagering period of 14 days for both parts of the welcome offer.
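The arithmetic behind the deposit bonus can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the function names are hypothetical, and the text does not say whether the 35x wager applies to the bonus alone or to the deposit plus bonus, so the sketch assumes it applies to the bonus amount.

```python
def welcome_bonus(deposit: float, match_percent: int = 100, cap: float = 1000.0) -> float:
    """Bonus credited on the first deposit: a percentage match, limited by the cap."""
    return min(deposit * match_percent / 100, cap)


def required_turnover(bonus: float, wager_multiplier: int = 35) -> float:
    """Total amount of bets needed to clear the bonus.

    Assumption: the multiplier is applied to the bonus amount only.
    """
    return bonus * wager_multiplier


# A 200-dollar deposit is matched in full; a 1,500-dollar deposit hits the cap.
small = welcome_bonus(200)
large = welcome_bonus(1500)
print(small, required_turnover(small))  # 200.0 7000.0
print(large, required_turnover(large))  # 1000.0 35000.0
```

Under these assumptions, even a modest bonus requires a turnover many times larger than the deposit within the 14-day window.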

Cashback bonus

At the start of each month, the official Vavada site credits a refund for lost bets. To get the maximum cashback of ten percent, the customer account must have "platinum" status. The refund is calculated as follows: all losses and winnings for the previous month are added up, and the percentage is taken from the resulting negative balance. The gift is subject to 5x wagering.
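The cashback rule described above can be written as a short Python sketch. The function name and the sample figures are hypothetical, and the sketch assumes the simplest reading of the text: winnings are netted against losses for the month, and the percentage is applied only when the result is negative.

```python
def monthly_cashback(losses: float, winnings: float, rate_percent: int = 10) -> float:
    """Cashback for one month, following the netting rule described in the text.

    Assumption: nothing is paid when the monthly result is zero or positive.
    """
    net_result = winnings - losses
    if net_result >= 0:
        return 0.0
    return -net_result * rate_percent / 100


# Hypothetical month: 1,500 lost, 900 won, platinum status (10%).
cashback = monthly_cashback(losses=1500, winnings=900)
print(cashback)      # 60.0
print(cashback * 5)  # 300.0, the turnover needed under the 5x wager
```

Lower statuses would use a smaller `rate_percent`; the text gives only the ten percent maximum, so other rates are left as a parameter.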

Birthday bonus

Log in to the personal account and fill in your personal data and date of birth; this qualifies you for a special gift from the casino. On your birthday you receive 50 Vavada free spins, to be played in the Maya Mystery slot with a mandatory 5x wager.

Beyond the standard set above, you can also activate promo codes that are given away on social networks. The club's bonus codes are also available in the email newsletter and from support in the chat.

Vavada support

Currently Vavada support provides quality, prompt help both to visitors without a game account and to registered players. Round-the-clock support works in the live chat. All available ways to contact the online casino's representatives are listed below:

- Live chat. The live chat provides support 24/7.

- Email. To send a message by email, follow the "Write to us" link to see the current email address.

- Phone number. The contact details also include the company's phone number.

- Social networks. Support can be contacted via Skype. VIP players with top statuses also get a personal manager in any messenger the player chooses.

Vavada online casino license

The Vavada site has held a Curaçao license since it entered the online gambling market in 2017. A license is an important part of a reliable betting process for users. On such a site they need not doubt the fairness of the games offered, the timely payment of winnings, or the security of personal information. Further advantages of the casino, and the reasons players choose it for an account, are listed below.

- Registration. Registration comes first: it takes a couple of steps and requires no identity verification.

- Games. You get official integrations: 4,500+ slots (with constant new releases and pre-releases), dozens of live casino games and tables, free arcade games, and other top entertainment.

- Jackpots. At any moment a user can win one of the three levels of the Vavada jackpot: minor, major, and mega.

- Affiliate program. The platform provides flexible options and all the materials needed to attract new players. You earn a commission for each referral action under the CPA scheme, and you can also claim a long-term percentage from the casino for the ongoing play of a customer you brought in (RevShare).

- Payments. The company provides fast payouts in cryptocurrency and to e-wallets. Standard online banking transfers are processed as well.

- Responsible gaming. The page footer contains the responsible gaming provisions, which help you approach betting responsibly.

FAQ

Who owns Vavada casino?

The official Vavada casino site is owned and operated by Vavada B.V., which has operated in the online gambling industry for more than six years under a license from Curaçao, described here as the most popular regulator.

How do I find Vavada?

Open any search engine on a PC or smartphone, type vavada com, and go to the official version of the casino. If it is blocked by the provider, the system will redirect you to the current working Vavada casino mirror.

How do I deposit at Vavada?

To top up your account you can use transfers from Visa/MasterCard bank cards or make transactions from e-wallets. Many users have long used cryptocurrency on this platform.

What games does the online casino have?

The casino offers two game modes (demo and real money) in slots and arcade games. You can also play the most popular live casino titles and join classic tables for blackjack, roulette, and other games.

Reviews

- I opened a profile for the affiliate program. What stands out is that they immediately offered help with promotion and attracting customers, which is a real plus for the casino. I withdraw payouts steadily every month and have not had a single delay yet.

- The conditions for wagering the welcome bonus are very tough. How long do you have to play to wager the whole first deposit amount 35x? Some of the terms need to be reviewed, otherwise this bonus is of no use.

- I liked the slots section: no unnecessary settings, and I can see what I need. New releases are marked, pre-releases are highlighted too, and if you want to play popular slots you pick them in the filters. The navigation is well thought out!

- I am unhappy with the support response time. I wrote to the live chat during the day. I understand there may be many requests from other players, but waiting more than 30 minutes for a reply is too long!

- The promotions and bonuses are standard and do not change. I would really like to get more bonus offers. They are partly made up for by the constant tournaments and jackpots, but something is still missing at this casino.